High Performance Monte Carlo Simulation - Sobol Sequences, Brownian Bridges and the Monte Carlo Edge

AlgoQuantHub Weekly Deep Dive

Welcome to the Deep Dive!

Here each week on ‘The Deep Dive’ we take a close look at cutting-edge topics on algo trading and quant research.

This week, we explore cutting-edge Monte Carlo techniques that form the backbone of modern quantitative finance, from exotic derivatives pricing to XVA exposure and large-scale risk aggregation. While traditional Monte Carlo relies on pseudo-random numbers and converges slowly at O(N^(-1/2)), Sobol sequences, rooted in the van der Corput construction, bring deterministic structure to the simulation. When paired with dimension-reduction strategies like Brownian Bridge construction, they deliver substantial variance reduction and computational efficiency.

In the bonus content, we show how high-dimensional Monte Carlo simulations gain an edge by concentrating variance in the earliest dimensions. Readers will also find practical C++ source code demonstrating how to implement these techniques efficiently using recursive generation of Sobol points in real-world pricing engines.

Feature Article: The Monte Carlo Edge - From van der Corput to Sobol Sequences

Monte Carlo simulation is the backbone of modern quantitative finance. From pricing complex derivatives to XVA exposure and enterprise-scale risk aggregation, it powers the decisions and risk management of the world’s most sophisticated institutions.

While traditional Monte Carlo relies on pseudo-random numbers and converges slowly, Sobol sequences—rooted in the elegant van der Corput construction—replace randomness with deterministic structure. When paired with dimension-reduction strategies such as Brownian Bridge construction, they dramatically reduce variance and computational cost, giving practitioners a measurable edge in high-performance pricing engines.

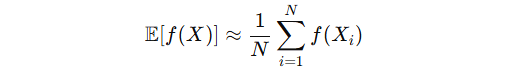

Monte Carlo integration approximates expectations as:

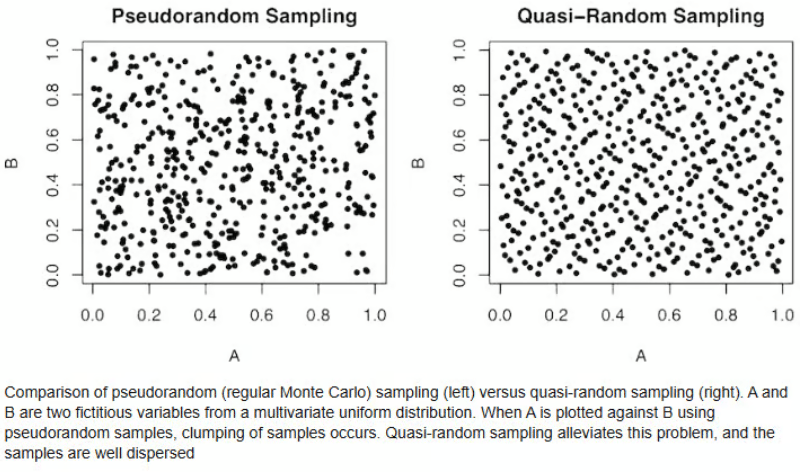

Pseudo-random sampling achieves convergence at a modest O(N^(-1/2)) rate. Quasi-random, or low-discrepancy, sequences are instead engineered to minimise discrepancy: the deviation between the empirical distribution of the sample points and a perfectly uniform distribution.

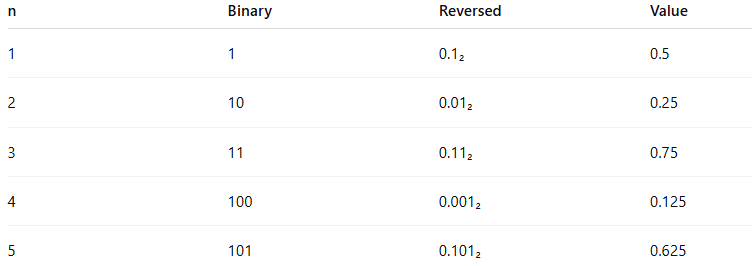

The one-dimensional Sobol sequence is founded on the van der Corput sequence. Its construction in base 2 is straightforward yet powerful:

1. Write the integer n in binary.

2. Reverse the digits.

3. Interpret the result as a fraction in [0,1).

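The first five points of the one-dimensional sequence illustrate the structure:

Sobol Sequence

n = 1: binary 1, reversed 0.1, value 1/2

n = 2: binary 10, reversed 0.01, value 1/4

n = 3: binary 11, reversed 0.11, value 3/4

n = 4: binary 100, reversed 0.001, value 1/8

n = 5: binary 101, reversed 0.101, value 5/8

Notice the elegance: the sequence begins at 1/2 and recursively fills the largest remaining gaps. The k-th binary digit of n, counted from the least significant, contributes a fraction of 1/2^k, creating uniform coverage without clustering.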
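The three construction steps can be sketched in a few lines of C++. This is a minimal illustration, not the article's generator: the function name vanDerCorput is hypothetical, and the loop simply peels binary digits off n and places them after the binary point.

```cpp
#include <cstdint>

// Base-2 van der Corput point: mirror the binary digits of n about the
// binary point, e.g. n = 6 = 110b becomes 0.011b = 0.375.
// (vanDerCorput is an illustrative name, not part of the article's code.)
double vanDerCorput(std::uint64_t n) {
    double result = 0.0;
    double weight = 0.5;  // value of the first digit after the binary point
    while (n != 0) {
        if (n & 1ULL) {
            result += weight;  // lowest digit of n becomes the next fractional digit
        }
        n >>= 1;
        weight *= 0.5;
    }
    return result;
}
```

Calling vanDerCorput(n) for n = 1, 2, 3, 4, 5 gives 1/2, 1/4, 3/4, 1/8, 5/8. Every value is a finite binary fraction, so the results are exact in double precision.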

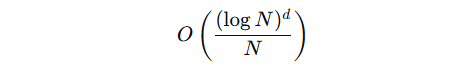

Sobol sequences generalise this approach to higher dimensions with carefully chosen direction numbers, leading to an asymptotic integration error of O((log N)^d / N) in d dimensions, compared with O(N^(-1/2)) for pseudo-random sampling.

Even in moderately high dimensions, this outperforms pseudo-random Monte Carlo, allowing professionals to achieve high accuracy with fewer paths—an essential advantage in both trading and risk management.
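To make the convergence claim concrete, here is a small one-dimensional sketch that estimates the integral of x^2 over [0,1] (exact value 1/3) with van der Corput points and with pseudo-random uniforms. The function names, the test integrand and the choice of std::mt19937_64 are assumptions made for illustration only.

```cpp
#include <cmath>
#include <cstdint>
#include <random>

// Base-2 van der Corput point (binary digits of n mirrored about the point).
double vanDerCorput(std::uint64_t n) {
    double result = 0.0;
    double weight = 0.5;
    for (; n != 0; n >>= 1, weight *= 0.5) {
        if (n & 1ULL) result += weight;
    }
    return result;
}

// Absolute error of the N-point estimate of the integral of x^2 on [0,1],
// using the quasi-random points n = 1..N.
double quasiRandomError(std::uint64_t N) {
    double sum = 0.0;
    for (std::uint64_t n = 1; n <= N; ++n) {
        const double u = vanDerCorput(n);
        sum += u * u;
    }
    return std::fabs(sum / static_cast<double>(N) - 1.0 / 3.0);
}

// Same estimate with pseudo-random uniforms from a seeded Mersenne Twister.
double pseudoRandomError(std::uint64_t N, unsigned seed) {
    std::mt19937_64 rng(seed);
    std::uniform_real_distribution<double> uniform(0.0, 1.0);
    double sum = 0.0;
    for (std::uint64_t n = 0; n < N; ++n) {
        const double u = uniform(rng);
        sum += u * u;
    }
    return std::fabs(sum / static_cast<double>(N) - 1.0 / 3.0);
}
```

Printing quasiRandomError(N) and pseudoRandomError(N, seed) for increasing N should show the quasi-random error shrinking roughly in proportion to 1/N for this smooth integrand, while the pseudo-random error fluctuates around the 1/sqrt(N) rate and depends on the seed.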

Keywords: Sobol sequence, van der Corput sequence, low discrepancy sequence, quasi-random Monte Carlo, numerical integration, Monte Carlo convergence, deterministic sampling, star discrepancy, quantitative finance simulation

Recommended Reading

Monte Carlo Methods in Financial Engineering by Paul Glasserman

Monte Carlo Methods in Finance by Peter Jäckel

Bonus Article: Sobol Brownian Bridge: A Monte Carlo Superpower

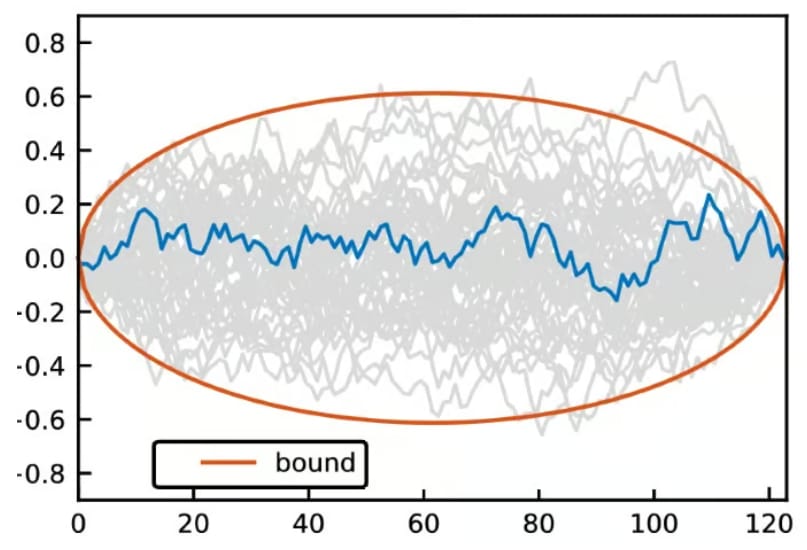

High-dimensional Monte Carlo is routine in derivatives pricing. Path-dependent products often require hundreds of time steps, each consuming one dimension of the low-discrepancy sequence.

Sobol sequences exhibit superior uniformity in early dimensions. If variance is concentrated in these coordinates—through Brownian Bridge construction or factor reordering—the simulation converges far faster.

Sobol Brownian Bridge

Brownian Bridge Construction: Rather than simulating sequential increments, the terminal value is sampled first and the intermediate points are then filled in recursively. This concentrates variance in the early Sobol coordinates and reduces the effective dimension, dramatically improving efficiency.
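The recursive fill can be sketched as follows. This is a minimal illustration under stated assumptions: an equally spaced time grid, a number of steps that is a power of two, and a hypothetical function name brownianBridgePath. The input z holds standard normals, for example Sobol coordinates mapped through the inverse Gaussian CDF, with z[0] driving the terminal value.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Build W(t_0), ..., W(t_n) on the grid t_i = i * T / n from n standard
// normals z. z[0] sets the terminal value, z[1] the midpoint, z[2] and z[3]
// the quarter points, and so on, so the leading coordinates carry the most
// variance. Assumption for this sketch: n = z.size() is a power of two.
std::vector<double> brownianBridgePath(const std::vector<double>& z, double T) {
    const std::size_t n = z.size();
    assert(n > 0 && (n & (n - 1)) == 0);

    std::vector<double> w(n + 1, 0.0);  // w[0] = W(0) = 0
    const double dt = T / static_cast<double>(n);

    std::size_t k = 0;
    w[n] = std::sqrt(T) * z[k++];  // terminal value first

    // Halve the interval length on each pass and fill in the midpoints.
    for (std::size_t step = n; step > 1; step /= 2) {
        const std::size_t half = step / 2;
        for (std::size_t left = 0; left < n; left += step) {
            const std::size_t right = left + step;
            // Given both endpoints, the midpoint of an interval of length
            // h = step * dt is normal with mean equal to the average of the
            // endpoints and variance h / 4.
            const double mean = 0.5 * (w[left] + w[right]);
            const double stdev = std::sqrt(0.25 * static_cast<double>(step) * dt);
            w[left + half] = mean + stdev * z[k++];
        }
    }
    return w;
}
```

With z = {1, 0, 0, 0} and T = 1 the path is the straight line 0, 0.25, 0.5, 0.75, 1: the first coordinate alone fixes the overall level of the path, which is why assigning it to the best-distributed Sobol dimension pays off.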

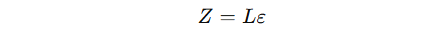

Correlated Assets and Cholesky Decomposition:

For multi-asset simulations we compute correlated random numbers Z as follows,

Z = L X

with L the Cholesky factor of the correlation matrix Σ, so that Σ = L Lᵀ, and X a vector of independent standard normals. Generate Sobol points on [0,1)^d, map to standard normals via the inverse Gaussian CDF, and multiply by L. While Sobol sequences combined with Cholesky already reduce variance, further gains are possible with PCA decomposition or variance-aligned factor reordering.
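The factorisation and the multiplication by L can be sketched as below. This is a minimal illustration: the Matrix alias and the function names choleskyFactor and correlate are hypothetical, the input matrix is assumed symmetric positive definite, and the independent normals are assumed to be supplied by the caller.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<double>>;

// Lower-triangular Cholesky factor L with L * L^T = sigma.
// Assumes sigma is symmetric positive definite.
Matrix choleskyFactor(const Matrix& sigma) {
    const std::size_t d = sigma.size();
    Matrix L(d, std::vector<double>(d, 0.0));
    for (std::size_t i = 0; i < d; ++i) {
        for (std::size_t j = 0; j <= i; ++j) {
            double s = sigma[i][j];
            for (std::size_t k = 0; k < j; ++k) {
                s -= L[i][k] * L[j][k];
            }
            if (i == j) {
                assert(s > 0.0);  // fails if sigma is not positive definite
                L[i][i] = std::sqrt(s);
            } else {
                L[i][j] = s / L[j][j];
            }
        }
    }
    return L;
}

// Z = L * x: maps independent standard normals x to correlated normals Z.
std::vector<double> correlate(const Matrix& L, const std::vector<double>& x) {
    std::vector<double> z(x.size(), 0.0);
    for (std::size_t i = 0; i < x.size(); ++i) {
        for (std::size_t j = 0; j <= i; ++j) {
            z[i] += L[i][j] * x[j];
        }
    }
    return z;
}
```

For two assets with correlation 0.5 the factor is L = [[1, 0], [0.5, sqrt(0.75)]]. Because L is lower triangular, the first asset depends only on the first coordinate, which is one reason the ordering of factors matters when the coordinates come from a Sobol sequence.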

Readers will also find ready-to-use C++ source code in Appendix 1 below demonstrating 1D Sobol point generation. The implementation is memory-efficient, streaming points on-the-fly, so you don’t need to store the entire sequence—perfect for high-performance Monte Carlo engines.

Keywords: Sobol Brownian bridge, quasi-random Monte Carlo derivatives, effective dimension Monte Carlo, Cholesky decomposition Monte Carlo, PCA simulation, variance reduction techniques, correlated Gaussian simulation, XVA Monte Carlo optimisation

Appendix 1

C++ Implementation: Sobol 1D Generator

The implementation below provides a lightweight 1D Sobol generator designed for high-performance Monte Carlo applications. It supports both sequential generation via a fast Gray-code recurrence (next()) and direct indexed access (value(n)), while avoiding storage of the full sequence. The generator maintains only the current index and internal state, so each call to next() produces the next point deterministically, on the fly, with a fixed memory footprint.

    #include <cstdint>
    #include <vector>

    /*
      Sobol1D
      --------
      A 1-dimensional Sobol quasi-random number generator.

      Key properties:
      - Deterministic low-discrepancy sequence
      - O(1) generation per point
      - No storage of previous points required
      - next() advances the sequence using the Gray-code structure
        of the index and bitwise operations
      - value(n) evaluates the n-th point directly

      The state is a 64-bit integer and outputs are doubles in [0,1).
    */
    class Sobol1D {
    public:
        Sobol1D() : index_(0), x_(0) {
            initializeDirectionNumbers();
        }

        /*
          Generate the next Sobol number in [0,1).
          The algorithm:
          1. Find the position c of the rightmost zero bit of the
             current index (count the trailing ones).
          2. XOR the integer state with direction number v[c].
          3. Increment the sequence index.
          4. Scale to [0,1) using 2^-64.
          Points arrive in Gray-code order: 0.5, 0.75, 0.25, ...
        */
        double next() {
            // __builtin_ctzll is a GCC/Clang builtin; in C++20,
            // std::countr_one(index_) from <bit> is a portable alternative.
            const int c = __builtin_ctzll(~index_);

            // Update integer state using XOR with direction number
            x_ ^= direction_numbers_[c];

            // Move to next index
            ++index_;

            // Exact for the first 2^53 points (low 11 bits of x_ are zero)
            return static_cast<double>(x_) * normalization_;
        }

        /*
          Direct access: the point returned by the n-th call to next()
          after construction or reset() (n >= 1); value(0) is 0.
          Does not change the generator state.
        */
        double value(uint64_t n) const {
            uint64_t gray = n ^ (n >> 1);  // Gray code of the index
            uint64_t x = 0;
            for (int i = 0; gray != 0; ++i, gray >>= 1) {
                if (gray & 1ULL) x ^= direction_numbers_[i];
            }
            return static_cast<double>(x) * normalization_;
        }

        // Restart the sequence from the beginning.
        void reset() {
            index_ = 0;
            x_ = 0;
        }

    private:
        uint64_t index_;  // Current sequence index
        uint64_t x_;      // Current integer state
        std::vector<uint64_t> direction_numbers_;  // Precomputed direction numbers

        // 2^-64
        static constexpr double normalization_ = 1.0 / static_cast<double>(1ULL << 63) / 2.0;

        /*
          Initialize direction numbers.
          For 1D Sobol, direction numbers are powers of two:
          v[i] = 1 << (63 - i)
          v[0] is the top bit, which becomes 1/2 after scaling
          by 2^-64, so the sequence starts at 1/2.
        */
        void initializeDirectionNumbers() {
            direction_numbers_.resize(64);
            for (int i = 0; i < 64; ++i) {
                direction_numbers_[i] = 1ULL << (63 - i);
            }
        }
    };

Note that we do not need to store previously generated points.

Sobol generation works by maintaining only:

• The current index, index_

• The current integer state, x_

Each new point is computed by XOR-ing with a single direction number determined by the Gray-code structure of the index. We do not need the full history of generated values. The recurrence is local and self-contained.

This means:

• No vector of pre-generated samples

• No memory overhead beyond the small, fixed generator state

• No dependency on earlier stored values

• Fully incremental generation

You can call next() on the fly inside a Monte Carlo loop, pricing engine, or Brownian bridge construction, and it will deterministically produce the next quasi-random point.

That property, O(1) generation with a small fixed state and no stored history, is one of the elegant reasons Sobol sequences scale so well in high-performance simulation engines.
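The link between the incremental recurrence and direct indexed access can be checked with a short sketch. It assumes direction numbers v[c] = 2^(63 - c), so that the leading bit maps to 1/2 after scaling by 2^-64; the function names are hypothetical. Under that assumption, the integer state after n steps equals the bit-reversed Gray code of n.

```cpp
#include <cstdint>

// Reverse the 64 bits of g, so bit j of g lands on bit (63 - j).
std::uint64_t reverseBits64(std::uint64_t g) {
    std::uint64_t r = 0;
    for (int j = 0; j < 64; ++j) {
        r = (r << 1) | (g & 1ULL);
        g >>= 1;
    }
    return r;
}

// Direct evaluation: the integer state after n steps is the bit reversal
// of the Gray code n ^ (n >> 1).
std::uint64_t stateDirect(std::uint64_t n) {
    return reverseBits64(n ^ (n >> 1));
}

// Incremental evaluation: apply the XOR recurrence n times from zero.
std::uint64_t stateIncremental(std::uint64_t n) {
    std::uint64_t x = 0;
    for (std::uint64_t i = 0; i < n; ++i) {
        int c = 0;  // position of the rightmost zero bit of i
        while ((i >> c) & 1ULL) ++c;
        x ^= 1ULL << (63 - c);  // assumed direction number v[c] = 2^(63 - c)
    }
    return x;
}
```

The two functions agree for every n because consecutive Gray codes differ in exactly one bit, and that bit sits at the position of the rightmost zero of the previous index. This is what allows a generator to offer both a cheap step and random access without storing any history.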

Example: How to use the Sobol 1D Generator

Below is a usage example showing how to:

• Generate Sobol numbers sequentially

• Access arbitrary indices directly

• Use the generator inside a simple Monte Carlo integration

This demonstrates exactly how you would integrate the class into a pricing engine or research prototype.

    #include <cstdint>
    #include <iostream>

    // Assume the Sobol1D class definition is included above

    int main() {
        // ----------------------------------------
        // 1. Sequential Generation
        // ----------------------------------------
        Sobol1D sobol;
        std::cout << "First 5 Sobol points:\n";
        for (int i = 0; i < 5; ++i) {
            double u = sobol.next();
            std::cout << u << "\n";
        }

        // ----------------------------------------
        // 2. Direct Indexed Access
        // ----------------------------------------
        std::cout << "\nValue at index 1000:\n";
        double u1000 = sobol.value(1000);
        std::cout << u1000 << "\n";

        // ----------------------------------------
        // 3. Monte Carlo Integration Example
        //    Estimate ∫₀¹ x² dx = 1/3
        // ----------------------------------------
        const uint64_t N = 100000;
        sobol.reset();  // restart sequence
        double sum = 0.0;
        for (uint64_t i = 0; i < N; ++i) {
            double u = sobol.next();
            sum += u * u;
        }
        double estimate = sum / static_cast<double>(N);

        std::cout << "\nEstimate of ∫ x^2 dx:\n";
        std::cout << "Estimate: " << estimate << "\n";
        std::cout << "Exact: " << 1.0 / 3.0 << "\n";
        return 0;
    }

What This Demonstrates

• next() streams quasi-random numbers efficiently without storing the sequence.

• value(n) allows direct deterministic access to the nth point.

• Resetting ensures reproducibility.

The integration example applies the generator to a smooth integrand, the setting in which Sobol points typically give a smaller integration error than pseudo-random sampling at the same number of points.

In production pricing engines, this same structure scales naturally:

1. Generate Sobol vectors in higher dimensions

2. Apply inverse Gaussian transform

3. Apply Cholesky or PCA factorisation

4. Feed into path construction (optionally Brownian bridge)

This pattern gives you deterministic, low-variance Monte Carlo with minimal memory overhead and excellent performance characteristics.
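The inverse Gaussian transform has no ready-made function in the C++ standard library, so the sketch below inverts the normal CDF by bisection using only std::erfc. It is deliberately simple and slow, and the function names are hypothetical. A pricing engine would more likely use a rational approximation such as Beasley-Springer-Moro or Wichura's AS241.

```cpp
#include <cmath>

// Standard normal CDF expressed through the complementary error function.
double normalCdf(double x) {
    return 0.5 * std::erfc(-x / std::sqrt(2.0));
}

// Inverse standard normal CDF for u strictly inside (0,1), found by
// bisection on normalCdf. Illustrative only: accurate but far slower
// than the rational approximations used in production code.
double inverseNormalCdf(double u) {
    double lo = -40.0;
    double hi = 40.0;
    for (int iter = 0; iter < 200; ++iter) {
        const double mid = 0.5 * (lo + hi);
        if (normalCdf(mid) < u) {
            lo = mid;  // root lies to the right of mid
        } else {
            hi = mid;  // root lies at or to the left of mid
        }
    }
    return 0.5 * (lo + hi);
}
```

Each Sobol coordinate u is mapped to a standard normal with inverseNormalCdf(u) before the Cholesky or PCA step. The input must lie strictly between 0 and 1, so a point equal to 0 (such as index 0 of the sequence) should be skipped or shifted.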

Useful Links

Quant Research

SSRN Research Papers - https://ssrn.com/author=1728976

GitHub Quant Research - https://github.com/nburgessx/QuantResearch

Learn about Financial Markets

Subscribe to my Quant YouTube Channel - https://youtube.com/@AlgoQuantHub

Quant Training & Software - https://payhip.com/AlgoQuantHub

Follow me on Linked-In - https://www.linkedin.com/in/nburgessx/

Explore my Quant Website - https://nicholasburgess.co.uk/

My Quant Book, Low Latency IR Markets - https://github.com/nburgessx/SwapsBook

AlgoQuantHub Newsletters

The Edge

The ‘AQH Weekly Edge’ newsletter for cutting edge algo trading and quant research.

https://bit.ly/AlgoQuantHubEdge

The Deep Dive

Dive deeper into the world of algo trading and quant research with a focus on getting things done for real, includes video content, digital downloads, courses and more.

https://bit.ly/AlgoQuantHubDeepDive

Feedback & Requests

I’d love your feedback to help shape future content to best serve your needs. You can reach me at [email protected]